How to Calculate Revenue Lost to AI Recommendations: A B2B SaaS Framework

May 4, 2026 · Citedge

Learn how B2B SaaS teams can estimate revenue lost when AI search engines recommend competitors instead of their brand.

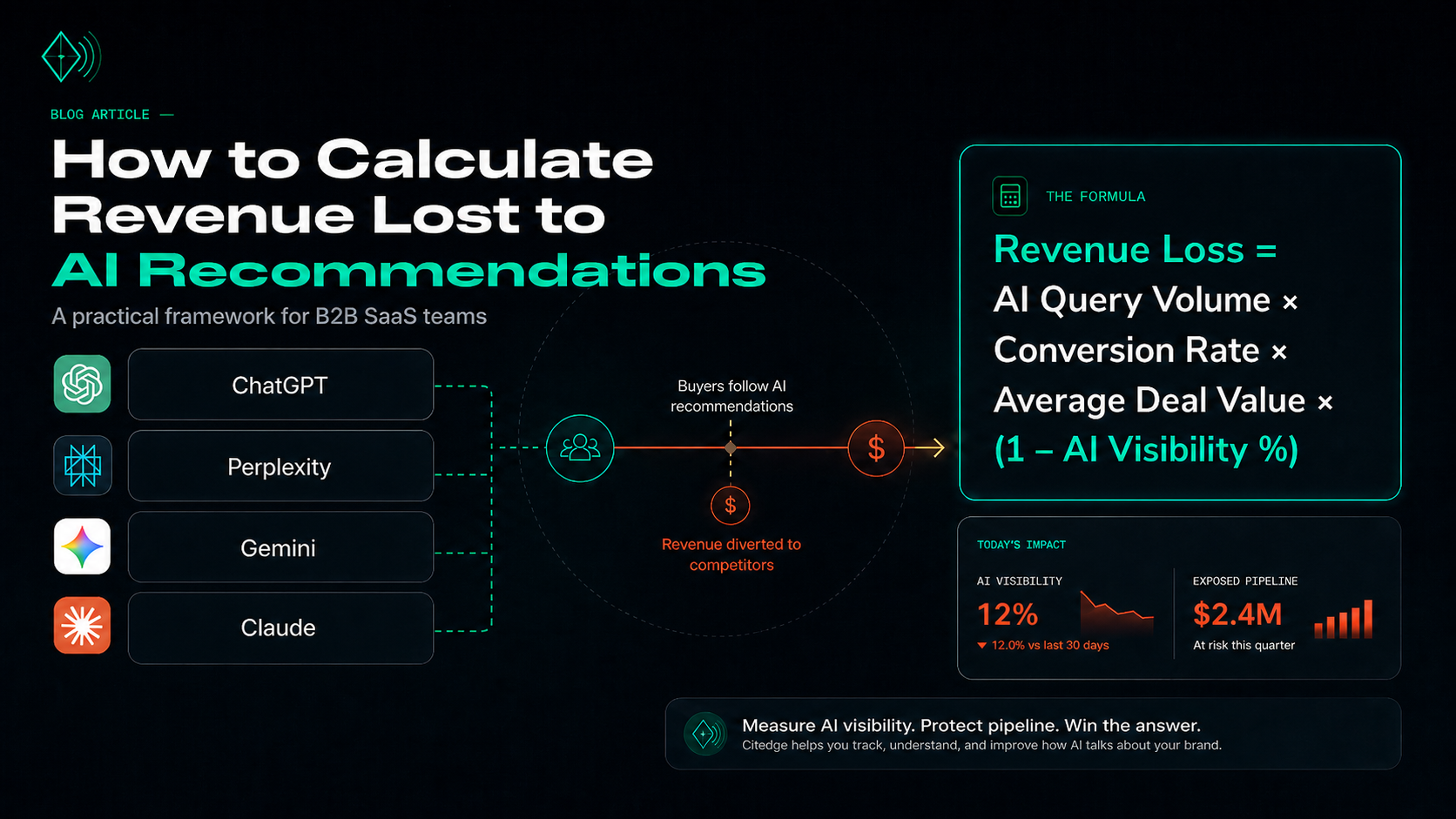

AI recommendations now influence which B2B SaaS vendors buyers discover, compare, and shortlist. If ChatGPT, Perplexity, Gemini, or Claude recommends your competitor instead of your product, that buyer may never visit your website, enter your funnel, or appear in your CRM.

That creates a new measurement problem: how do you calculate revenue lost to AI recommendations when the lost buyer never clicks?

The answer is not perfect attribution. It is a practical revenue exposure model.

You estimate the commercial value of the queries where buyers are asking AI systems for vendor recommendations, measure how often your brand appears compared with competitors, and calculate the pipeline you are likely missing when your product is absent, misrepresented, or ranked below alternatives.

Concise answer:

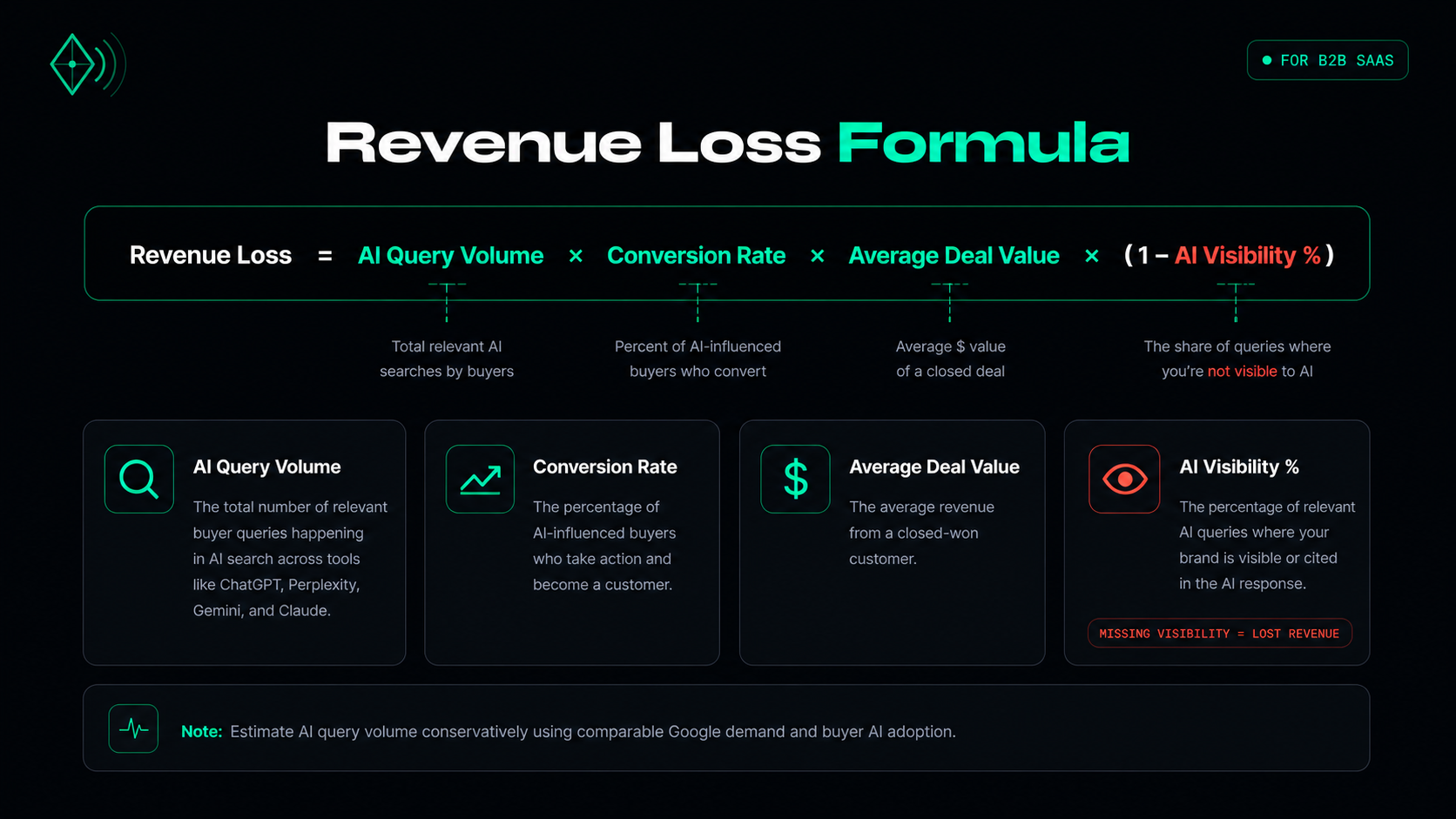

To calculate revenue lost to AI recommendations, multiply estimated AI query volume by conversion rate, average deal value, and the percentage of responses where your brand is not visible. The formula is: Revenue Loss = AI Query Volume × Conversion Rate × Average Deal Value × (1 – AI Visibility %).

| Quick Reference | What to Use |
|---|---|
| Core formula | Revenue Loss = AI Query Volume × Conversion Rate × Average Deal Value × (1 – AI Visibility %) |
| Best for | B2B SaaS teams estimating pipeline exposure from AI-driven buyer discovery |
| Primary outputs | Exposed pipeline by query, by model, and by failure mode |
| Priority action | Improve visibility first on highest-intent prompts with highest estimated revenue exposure |

This framework is especially useful for B2B SaaS teams because buyers increasingly research vendors before speaking with sales. Gartner reported that 45% of surveyed B2B buyers used AI during a recent purchase, and Forrester has reported that business buyers increasingly treat generative AI and conversational search as meaningful buying information sources.

For SaaS companies, that means AI search visibility is no longer just a brand metric. It is a pipeline metric.

What Does “Revenue Lost to AI Recommendations” Mean?

Revenue lost to AI recommendations is the estimated pipeline or sales opportunity your company misses when AI systems recommend competitors, omit your product, or describe your brand inaccurately during high-intent buyer research.

This does not mean every missing AI mention equals a lost deal. It means there is measurable revenue exposure.

For example, a buyer might ask:

- “Best lead scoring software for B2B SaaS”

- “Top AI SEO tools for startups”

- “Citedge alternatives”

- “Best customer success platforms for mid-market SaaS”

- “Which CRM enrichment tool is best for enterprise teams?”

If the AI response lists three competitors and not your brand, your company may never enter the buyer’s evaluation set.

That is the invisible loss.

Traditional analytics tools only measure what happens after a user reaches your site. AI recommendations often happen before that point, inside a third-party answer engine. GA4 can track some AI referral traffic, but it does not fully capture AI-influenced discovery because many visits appear as direct traffic or never produce a visit at all.

Why AI Recommendations Matter for B2B SaaS Revenue

B2B SaaS buying has always involved dark-funnel research: review sites, communities, analyst reports, LinkedIn posts, podcasts, and peer recommendations. AI search adds a new layer.

Instead of reading ten pages, a buyer can ask an AI assistant for a shortlist.

That answer may summarize:

- Vendor names

- Category leaders

- Best-fit use cases

- Pricing impressions

- Pros and cons

- Competitor comparisons

- Third-party citations

- Review-site sentiment

- Reddit discussions

- Documentation and product pages

The commercial risk is simple: AI systems can shape the shortlist before your sales or marketing team ever gets a chance to influence the buyer.

6sense’s 2025 B2B Buyer Experience Report also notes that buyers often complete a substantial portion of the journey before engaging sellers, reinforcing why pre-site and pre-sales visibility matters.

Why It Matters

If your brand is missing from AI-generated recommendations, you may lose:

- Category awareness

- Comparison visibility

- Branded alternative searches

- High-intent demo demand

- Competitive positioning

- Pipeline from buyers who never click

Summary: AI recommendations matter because they shape vendor discovery before website analytics, retargeting, lead capture, or sales outreach can reach or measure the buyer.

Key Definitions

AI Search Visibility

AI search visibility is the percentage of relevant AI responses where your brand appears for tracked buyer-intent prompts.

Example:

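If you collect 100 responses to your tracked prompts across ChatGPT, Perplexity, Gemini, and Claude, and your brand appears in 28 of them, your AI visibility is 28%. Count responses, not prompts: 100 prompts run on four models produce 400 responses, and that is the denominator.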

AI Recommendation Share

AI recommendation share measures how often your brand is actively recommended, not merely mentioned.

A brand mention is weaker than a recommendation. For example:

- Mention: “Other tools in this category include Citedge.”

- Recommendation: “Citedge is best for B2B SaaS teams that need AI visibility tracking.”
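The two definitions above can be sketched in a few lines of Python. This is a minimal illustration, not a description of how any particular tool computes these metrics: the `Response` record and its field names are hypothetical, and using all tracked responses as the denominator for recommendation share is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One AI answer to one tracked prompt (hypothetical record shape)."""
    prompt: str
    model: str
    mentioned: bool     # brand name appears anywhere in the answer
    recommended: bool   # brand is actively recommended, not just listed


def ai_visibility(responses):
    """Share of responses where the brand appears at all."""
    if not responses:
        return 0.0
    return sum(r.mentioned for r in responses) / len(responses)


def recommendation_share(responses):
    """Share of responses where the brand is actively recommended.

    Assumption: the denominator is all tracked responses.
    """
    if not responses:
        return 0.0
    return sum(r.recommended for r in responses) / len(responses)


# Illustrative data only.
sample = [
    Response("best lead scoring software", "ChatGPT", True, True),
    Response("best lead scoring software", "Perplexity", True, False),
    Response("best lead scoring software", "Gemini", False, False),
    Response("best lead scoring software", "Claude", False, False),
]

print(f"Visibility: {ai_visibility(sample):.0%}")
print(f"Recommendation share: {recommendation_share(sample):.0%}")
```

Keeping the two metrics separate makes it visible when a brand is named often but rarely endorsed.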

Revenue Exposure

Revenue exposure is the estimated value of potential pipeline influenced by AI responses where your brand is absent or disadvantaged.

Failure Mode

A failure mode explains why your brand is losing visibility. Common examples include not being mentioned, being mentioned but not recommended, or being recommended for the wrong use case.

How to Calculate Revenue Lost to AI Recommendations

Use this formula:

Revenue Loss = Estimated AI Query Volume × Conversion Rate × Average Deal Value × (1 – AI Visibility %)

Each variable should be estimated carefully.

| Variable | Meaning | Example |
|---|---|---|
| Estimated AI query volume | Monthly AI searches for a high-intent query | 4,200 |
| Conversion rate | Expected conversion from this intent tier | 2.5% |
| Average deal value | ACV or expected customer value | $14,000 |
| AI visibility % | How often your brand appears in relevant AI responses | 18% |

Worked Example

Imagine a mid-market lead scoring SaaS company wants to estimate lost revenue from one high-intent query:

Query: “best lead scoring software”

Estimated monthly AI query volume: 4,200

Conversion rate: 2.5%

Average contract value: $14,000

AI visibility: 18%

Revenue Loss = 4,200 × 0.025 × $14,000 × (1 – 0.18)

Revenue Loss = 4,200 × 0.025 × $14,000 × 0.82

Revenue Loss = $1,205,400 in exposed monthly pipeline

This is not guaranteed closed revenue. It is exposed pipeline: the potential opportunity value of AI-influenced demand where your brand is not sufficiently visible.

A realistic recovery target might be smaller, such as 20–40% of exposed pipeline, depending on category maturity, brand strength, deal cycle length, and how quickly you can improve AI visibility.
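The worked example can be reproduced with a short Python sketch. The function names are illustrative, visibility is passed as a fraction (0.18 for 18%), and the 20–40% band is the rough recovery range mentioned above rather than a benchmark.

```python
def exposed_pipeline(ai_query_volume, conversion_rate, average_deal_value, ai_visibility):
    """Revenue Loss = volume x conversion rate x deal value x (1 - visibility)."""
    if not 0.0 <= ai_visibility <= 1.0:
        raise ValueError("ai_visibility must be a fraction between 0 and 1")
    return ai_query_volume * conversion_rate * average_deal_value * (1.0 - ai_visibility)


def recovery_range(exposure, low=0.20, high=0.40):
    """Apply a recovery band (20-40% by default) to an exposure figure."""
    return exposure * low, exposure * high


# Inputs from the worked example: "best lead scoring software".
exposure = exposed_pipeline(4_200, 0.025, 14_000, 0.18)
low, high = recovery_range(exposure)

print(f"Exposed monthly pipeline: ${exposure:,.0f}")
print(f"Recovery target range: ${low:,.0f} to ${high:,.0f}")
```

With these inputs the exposure comes to $1,205,400, and the 20–40% band works out to roughly $241,080 to $482,160 per month.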

Summary: The formula gives your team a defensible starting point for budget conversations. It turns “we are invisible in ChatGPT” into “we have $X in AI-influenced pipeline exposure.”

Step 1: Identify Your High-Value Query Categories

Start with the queries that have the strongest buyer intent.

For most B2B SaaS companies, these include:

- “Best [category] software”

- “[Competitor] alternatives”

- “[Your brand] vs [competitor]”

- “Top [category] tools for [audience]”

- “Best [use case] platform”

- “How to choose [category] software”

- “[Category] software for enterprise”

- “[Category] software for startups”

Prioritize comparison, alternative, and category queries over broad educational keywords.

For example, “what is lead scoring?” may attract top-of-funnel researchers. But “best predictive lead scoring software for B2B SaaS” is more likely to influence vendor selection.

Best For

This step is best for:

- Demand generation teams

- Product marketers

- SEO teams

- RevOps leaders

- Founders in competitive SaaS categories

- Agencies managing SaaS positioning

Practical Tip

Pull your starting query list from:

- Google Search Console

- Paid search terms

- Sales call transcripts

- Demo request forms

- Competitor comparison pages

- Review-site categories

- Customer interview language

- Internal site search

- AI prompts your buyers are likely to ask

Step 2: Establish Baseline AI Visibility

Once you have your query set, test each prompt across the major AI answer engines your buyers may use.

At minimum, track:

- ChatGPT

- Perplexity

- Gemini

- Claude

For each prompt, record:

- Whether your brand appears

- Which competitors appear

- Where your brand appears in the response

- Whether your brand is recommended or merely mentioned

- What sources are cited or referenced

- Whether the positioning is accurate

- Whether the answer includes outdated or incorrect claims

Doing this manually is possible for a small sample, but it becomes difficult quickly.

For example:

30 prompts × 4 AI models × weekly tracking = 120 checks per week

120 checks × 4 weeks = 480 checks per month

That is before adding screenshots, source tracking, trend analysis, or revenue modeling.
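One way to keep manual tracking consistent is to fix the shape of each observation up front. The sketch below is a hypothetical record that mirrors the checklist above, plus a helper for the workload arithmetic; all field names are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PromptCheck:
    """One prompt run against one AI model (hypothetical record shape)."""
    prompt: str
    model: str
    brand_appears: bool
    competitors: List[str] = field(default_factory=list)
    position: Optional[int] = None          # 1 = first vendor named; None if absent
    recommended: bool = False                # recommended vs. merely mentioned
    cited_sources: List[str] = field(default_factory=list)
    positioning_accurate: Optional[bool] = None
    outdated_or_incorrect_claims: bool = False


def checks_per_month(prompts, models, runs_per_week=1, weeks=4):
    """Number of individual checks implied by a tracking plan."""
    return prompts * models * runs_per_week * weeks


# 30 prompts x 4 models, run weekly for 4 weeks.
print(checks_per_month(30, 4))  # 480
```

Recording the same fields for every check is what makes week-over-week comparison possible later.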

Citedge is built for this use case: it monitors ChatGPT, Perplexity, Gemini, and Claude recommendations, tracks competitor mentions, and attributes revenue exposure per query.

Step 3: Estimate AI Query Volume

There is no public equivalent of Google Search Console for ChatGPT, Claude, Gemini, or Perplexity query volume.

So your goal is not exact volume. Your goal is a reasonable estimate.

A practical approach:

- Start with Google Search Console impressions for the equivalent query.

- Adjust based on how likely your audience is to use AI tools during research.

- Apply a conservative AI adoption multiplier.

- Revisit the estimate quarterly.

For B2B SaaS, you might model scenarios instead of relying on one number:

| Scenario | AI Volume Estimate |
|---|---|
| Conservative | 15–25% of comparable Google query volume |
| Moderate | 25–45% of comparable Google query volume |
| Aggressive | 45%+ of comparable Google query volume |

Use the conservative scenario for CFO-facing forecasts unless you have your own AI referral, survey, or attribution data.

Why Scenarios Work Better Than One Estimate

AI search behavior varies by category. Technical buyers, marketers, operators, and founders may use AI tools differently. Scenario modeling prevents overconfidence and makes the business case more credible.
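The scenario table can be turned into a small helper that converts a comparable Google query volume into a range of AI volume estimates. This is a sketch under the multipliers listed above; the 10,000 monthly Google volume is a made-up input, and the aggressive scenario is left open-ended because the table only gives a floor.

```python
# Multipliers from the scenario table: (low, high) share of Google volume.
SCENARIOS = {
    "conservative": (0.15, 0.25),
    "moderate": (0.25, 0.45),
    "aggressive": (0.45, None),  # "45%+": no upper bound given
}


def ai_volume_range(google_monthly_volume, scenario):
    """Return (low, high) estimated monthly AI query volume for a scenario.

    high is None when the scenario has no stated upper bound.
    """
    low_share, high_share = SCENARIOS[scenario]
    low = google_monthly_volume * low_share
    high = None if high_share is None else google_monthly_volume * high_share
    return low, high


google_volume = 10_000  # hypothetical comparable Google query volume
for name in SCENARIOS:
    low, high = ai_volume_range(google_volume, name)
    upper = "and up" if high is None else f"to {high:,.0f}"
    print(f"{name}: {low:,.0f} {upper}")
```

Feeding the low end of the conservative range into the revenue formula gives the most defensible figure for finance-facing forecasts.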

Step 4: Calculate Revenue Exposure by Query

Now apply the formula query by query.

Revenue Exposure = Estimated AI Query Volume × Conversion Rate × Average Deal Value × Missing Visibility %

Where:

Missing Visibility % = 1 – AI Visibility %

Example query-level table:

| Query | AI Volume | Conv. Rate | ACV | AI Visibility | Exposed Pipeline |
|---|---|---|---|---|---|
| best lead scoring software | 4,200 | 2.5% | $14,000 | 18% | $1,205,400 |
| predictive lead scoring tools | 1,800 | 2.0% | $14,000 | 25% | $378,000 |
| lead scoring software for SaaS | 900 | 3.0% | $14,000 | 10% | $340,200 |

This format helps teams decide where to act first.

A query with lower search volume may still matter if it has higher buying intent, stronger conversion rate, or larger deal value.
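The query-level table can be reproduced and ranked with a few lines of Python. The dictionary keys are illustrative; the numbers are the ones from the table above.

```python
queries = [
    {"query": "best lead scoring software", "volume": 4_200, "conv": 0.025, "acv": 14_000, "visibility": 0.18},
    {"query": "predictive lead scoring tools", "volume": 1_800, "conv": 0.020, "acv": 14_000, "visibility": 0.25},
    {"query": "lead scoring software for SaaS", "volume": 900, "conv": 0.030, "acv": 14_000, "visibility": 0.10},
]


def revenue_exposure(row):
    """Volume x conversion rate x ACV x missing visibility."""
    missing_visibility = 1.0 - row["visibility"]
    return row["volume"] * row["conv"] * row["acv"] * missing_visibility


# Rank queries by exposed pipeline, largest first.
ranked = sorted(queries, key=revenue_exposure, reverse=True)
for row in ranked:
    print(f"{row['query']}: ${revenue_exposure(row):,.0f}")

total = sum(revenue_exposure(row) for row in queries)
print(f"Total exposed monthly pipeline: ${total:,.0f}")
```

Across the three example queries the total comes to $1,923,600 in exposed monthly pipeline, with the first query accounting for most of it.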

Step 5: Segment Loss by Failure Mode

A single AI visibility score is not enough.

You need to know why you are losing.

Failure Mode 1: Not Mentioned at All

The AI system does not include your brand in the answer.

Likely causes:

- Weak third-party mentions

- Limited category association

- Thin comparison content

- Poor review-site presence

- Lack of authoritative citations

- Unclear homepage positioning

How to fix it:

- Publish clearer category pages

- Build comparison content

- Earn third-party mentions

- Improve review-site profiles

- Strengthen structured product information

- Get included in relevant “best tools” lists

Failure Mode 2: Mentioned but Not Recommended

The AI system knows your brand exists but does not position it as a leading option.

Likely causes:

- Competitors have stronger third-party validation

- Your use case is unclear

- Review sentiment favors competitors

- Your content does not answer buyer comparison questions

How to fix it:

- Create use-case-specific pages

- Improve comparison pages

- Address objections directly

- Add customer proof

- Build more entity-rich content around your category

Failure Mode 3: Recommended With Wrong Positioning

The AI system recommends your product, but for the wrong audience or use case.

Example:

A platform built for B2B SaaS is described as best for ecommerce brands.

How to fix it:

- Rewrite homepage positioning

- Clarify target customer pages

- Update old blog content

- Add schema markup

- Align review-site descriptions

- Correct inconsistent third-party profiles

Failure Mode 4: Compared Unfavorably

Your brand appears, but the model says a competitor is better.

Example:

“Citedge is useful for basic tracking, but [competitor] is better for enterprise analytics.”

How to fix it:

- Identify the source of the comparison

- Publish a direct comparison page

- Address the perceived weakness

- Update product documentation

- Add proof points, case studies, and feature evidence

Summary: Failure-mode analysis turns AI visibility from a vague score into an execution plan.
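As a rough sketch, the four failure modes can be expressed as a classifier over per-response flags and then tallied. The flag names, the labels, and the order in which the checks are applied are all assumptions made for illustration; a real review may need human judgment to set the flags in the first place.

```python
from collections import Counter


def failure_mode(mentioned, recommended, positioning_accurate, competitor_preferred):
    """Return a failure-mode label for one AI response, or None if nothing failed.

    Assumed precedence: absence first, then an explicit unfavorable comparison,
    then missing recommendation, then wrong positioning.
    """
    if not mentioned:
        return "not_mentioned"
    if competitor_preferred:
        return "unfavorable_comparison"
    if not recommended:
        return "mentioned_not_recommended"
    if not positioning_accurate:
        return "wrong_positioning"
    return None


# Illustrative observations:
# (mentioned, recommended, positioning_accurate, competitor_preferred)
observations = [
    (False, False, False, False),
    (True, False, True, False),
    (True, True, False, False),
    (True, True, True, True),
    (True, True, True, False),
    (False, False, False, False),
]

counts = Counter(failure_mode(*obs) for obs in observations)
for mode, n in counts.most_common():
    print(mode or "no_failure", n)
```

Counting responses per failure mode shows which fix list to start with; weighting each count by the query's revenue exposure would be a natural next step.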

Tool Comparison: AI Visibility vs Traditional Analytics

| Capability | Citedge | Google Analytics | SimilarWeb | Ahrefs | Semrush |
|---|---|---|---|---|---|
| Tracks ChatGPT recommendations | Yes | No | No | Limited / indirect | Limited / indirect |
| Tracks Perplexity citations | Yes | No | No | Limited / indirect | Limited / indirect |
| Tracks Gemini visibility | Yes | No | No | Limited / indirect | Limited / indirect |
| Tracks Claude recommendations | Yes | No | No | No | No |
| Measures off-site AI visibility | Yes | No | Partial | Partial | Partial |
| Shows competitor recommendation share | Yes | No | No | No | No |
| Estimates revenue exposure by query | Yes | No | No | No | No |
| Helps identify cited sources | Yes | No | Partial | Partial | Partial |

Traditional SEO and analytics tools still matter. You need Google Search Console, GA4, paid search data, CRM data, and SEO tools to understand demand and conversion. But they do not fully answer the AI visibility question.

GA4 can be configured to identify some AI referral traffic, but AI-influenced discovery is broader than referral tracking because many AI sessions do not send a clean referrer and many recommendations never produce a click.

Where Citedge Fits in the Workflow

Citedge fits between SEO analytics, competitive intelligence, and revenue attribution.

Use it to answer questions like:

- Does ChatGPT recommend us for our highest-intent category queries?

- Which competitors appear more often than we do?

- Does Perplexity cite our website or third-party sources?

- Are AI systems describing our product accurately?

- Which prompts represent the highest revenue exposure?

- Are we improving or declining week over week?

Citedge scans ChatGPT, Perplexity, Gemini, and Claude, tracks competitors, provides query-level revenue attribution, and includes prompt coverage across pricing tiers.

Best For

Citedge is best for B2B SaaS teams that want to:

- Monitor AI recommendation share

- Measure brand visibility in answer engines

- Identify competitor advantages in AI search

- Connect AI visibility to revenue exposure

- Prioritize SEO, content, PR, and review-site work

- Track improvements over time

Common Mistakes When Modeling AI Revenue Loss

Mistake 1: Treating AI Search Volume as Purely Additive

AI search is partly substitutional. Some users who ask ChatGPT today would have searched Google in the past.

Do not simply add AI volume on top of Google volume without adjustment. That can inflate your forecast.

Mistake 2: Tracking Only One AI Model

ChatGPT, Perplexity, Gemini, and Claude can produce different recommendations. Tracking only one model may create a false sense of confidence.

A brand might perform well in Perplexity but poorly in ChatGPT. The fix may differ by model because sources, citations, and answer formats vary.

Mistake 3: Measuring Mentions Instead of Recommendations

A mention is not the same as a recommendation.

The real question is not only “Did the model name us?” It is:

- Did it recommend us?

- For the right use case?

- Against which competitors?

- With what evidence?

- In what ranking position?

- With what caveats?

Mistake 4: Ignoring Branded Comparison Prompts

Branded prompts often carry high intent.

Track prompts like:

- “[Your brand] vs [competitor]”

- “Is [your brand] worth it?”

- “[Your brand] alternatives”

- “Best alternatives to [competitor]”

- “What is better than [your brand]?”

These prompts may happen late in the buying journey.

Mistake 5: Using One Visibility Score for Every Query

Averaging everything hides the real problem.

You need visibility by:

- Query

- Model

- Competitor

- Use case

- Funnel stage

- Region, if relevant

- Product line, if relevant
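A small grouping helper shows how a single average can hide the real problem. This is a sketch with made-up records and hypothetical field names; it groups by any combination of keys, such as query, model, or both.

```python
from collections import defaultdict


def visibility_by(records, *keys):
    """Visibility (share of responses where the brand appears) per group.

    With no keys, returns the overall figure under the empty-tuple key.
    """
    totals = defaultdict(lambda: [0, 0])  # group -> [appearances, responses]
    for record in records:
        group = tuple(record[key] for key in keys)
        totals[group][0] += int(record["appears"])
        totals[group][1] += 1
    return {group: hits / count for group, (hits, count) in totals.items()}


# Illustrative data only.
records = [
    {"query": "best lead scoring software", "model": "ChatGPT", "appears": False},
    {"query": "best lead scoring software", "model": "Perplexity", "appears": True},
    {"query": "predictive lead scoring tools", "model": "ChatGPT", "appears": False},
    {"query": "predictive lead scoring tools", "model": "Perplexity", "appears": True},
]

print(visibility_by(records))                    # overall
print(visibility_by(records, "model"))           # per model
print(visibility_by(records, "query", "model"))  # per query and model
```

In this sample the overall score is 50%, yet the brand is absent from every ChatGPT response and present in every Perplexity response, which is exactly the kind of gap an average conceals.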

How to Improve AI Search Visibility After Measuring It

Measurement is only useful if it leads to action.

To improve AI visibility, focus on the sources and signals AI systems are likely to use when generating buyer recommendations.

1. Strengthen Category Pages

Your website should clearly explain:

- What category you belong to

- Who your product is for

- What use cases you solve

- How you differ from alternatives

- What integrations, pricing, and workflows matter

2. Publish Comparison Content

Create honest comparison pages for:

- Your product vs competitors

- Competitor alternatives

- Best tools by use case

- Best tools by company size

- Best tools by industry

Avoid shallow “we are the best” pages. AI systems are more likely to extract useful comparisons from content that includes real decision criteria.

3. Improve Third-Party Mentions

AI answers often lean on sources beyond your website.

Work on:

- Review sites

- Partner pages

- Guest posts

- Podcasts

- Analyst mentions

- Reddit discussions

- Community answers

- Product directories

- Industry newsletters

4. Keep Product Information Consistent

Inconsistent descriptions confuse AI systems.

Make sure your positioning is aligned across:

- Homepage

- About page

- Product pages

- Help docs

- LinkedIn profile

- G2 or Capterra profiles

- Partner listings

- Press releases

- Comparison pages

5. Refresh Outdated Content

AI systems may surface outdated claims if old pages are still indexed or cited.

Update:

- Old pricing references

- Old feature descriptions

- Old competitor comparisons

- Deprecated product names

- Outdated screenshots

- Old customer segments

Frequently Asked Questions

How do you calculate revenue lost to AI recommendations?

Calculate revenue lost to AI recommendations by multiplying estimated AI query volume by conversion rate, average deal value, and the percentage of AI responses where your brand is not visible. The formula is: Revenue Loss = AI Query Volume × Conversion Rate × Average Deal Value × (1 – AI Visibility %).

What is AI search visibility?

AI search visibility is the percentage of relevant AI-generated answers where your brand appears for buyer-intent prompts. It can be measured across tools such as ChatGPT, Perplexity, Gemini, and Claude. Strong visibility means your brand appears consistently and is positioned accurately.

Can Google Analytics track AI recommendations?

Google Analytics can track some AI referral traffic when a user clicks through and referral data is passed. However, it cannot fully track AI recommendations that happen off-site, produce no click, or arrive without clear attribution. This makes AI visibility monitoring separate from standard website analytics.

Which AI models should B2B SaaS companies monitor?

B2B SaaS companies should monitor ChatGPT, Perplexity, Gemini, and Claude because buyers may use different AI tools for research, comparison, summarization, and vendor shortlisting. Tracking only one model can hide major visibility gaps.

What is a good AI visibility score?

A good AI visibility score depends on category maturity and competition. For a competitive B2B SaaS category, moving from low visibility to 30–50% visibility across priority prompts can be meaningful. The best target is not a universal benchmark; it is improvement on the prompts with the highest revenue exposure.

How often should AI visibility be measured?

AI visibility should be measured at least weekly for high-intent category and comparison prompts. AI-generated answers can change as models, retrieval systems, citations, reviews, and competitor content change.

What causes a SaaS brand to be missing from AI recommendations?

Common causes include weak third-party mentions, unclear positioning, limited comparison content, poor review-site presence, outdated product pages, and stronger competitor signals. The fix depends on whether the brand is absent, mentioned but not recommended, mispositioned, or unfavorably compared.

How can Citedge help measure AI revenue exposure?

Citedge helps B2B SaaS teams monitor how AI systems recommend their brand versus competitors across major AI models. It can be used to track prompt-level visibility, competitor mentions, cited sources, and estimated revenue exposure from missing or unfavorable recommendations.

Conclusion

To calculate revenue lost to AI recommendations, you need to connect three things: buyer-intent prompts, AI visibility, and revenue value.

Start with the queries that matter most to your pipeline. Measure whether ChatGPT, Perplexity, Gemini, and Claude recommend your brand or your competitors. Then apply a revenue exposure formula so your team can prioritize the prompts, sources, and failure modes that matter most.

AI search visibility is not replacing SEO. It is becoming a new layer of SEO, competitive intelligence, and revenue strategy.

Try Citedge free to see which AI recommendations mention your brand, which competitors appear instead, and which prompts may represent the biggest revenue opportunity.